Introduction

Government organizations struggle to process large volumes of legislative bills, with manual tasks like data collection, verification, and summarization delaying critical, timely insights. Metrum AI presents a comprehensive solution to address these challenges by leveraging small language models (SLMs) and agentic retrieval-augmented generation (RAG) techniques to streamline legislative bill analysis. This solution enables organizations to manage legislative workloads more efficiently by taking advantage of AI agents - intelligent systems that can operate semi-autonomously to complete offline, batched tasks such as summarization and validation of legislative data - all while running on the latest Dell PowerEdge servers equipped with 5th Gen AMD EPYC 9755 128-Core processors, ensuring scalability and performance.

By running entirely on CPUs, this solution empowers organizations to leverage existing CPU-based infrastructure for advanced AI workflows, avoiding the need for specialized hardware investments. CPUs not only support mixed workloads but are also widely available in most organizational setups, reducing the cost and complexity of deployment. This legislative bill analysis solution demonstrates that modern CPU-based systems powered by AMD EPYC processors can efficiently handle full agentic RAG workflows, with proven scalability across industries including manufacturing, healthcare, transportation, and telecommunications.

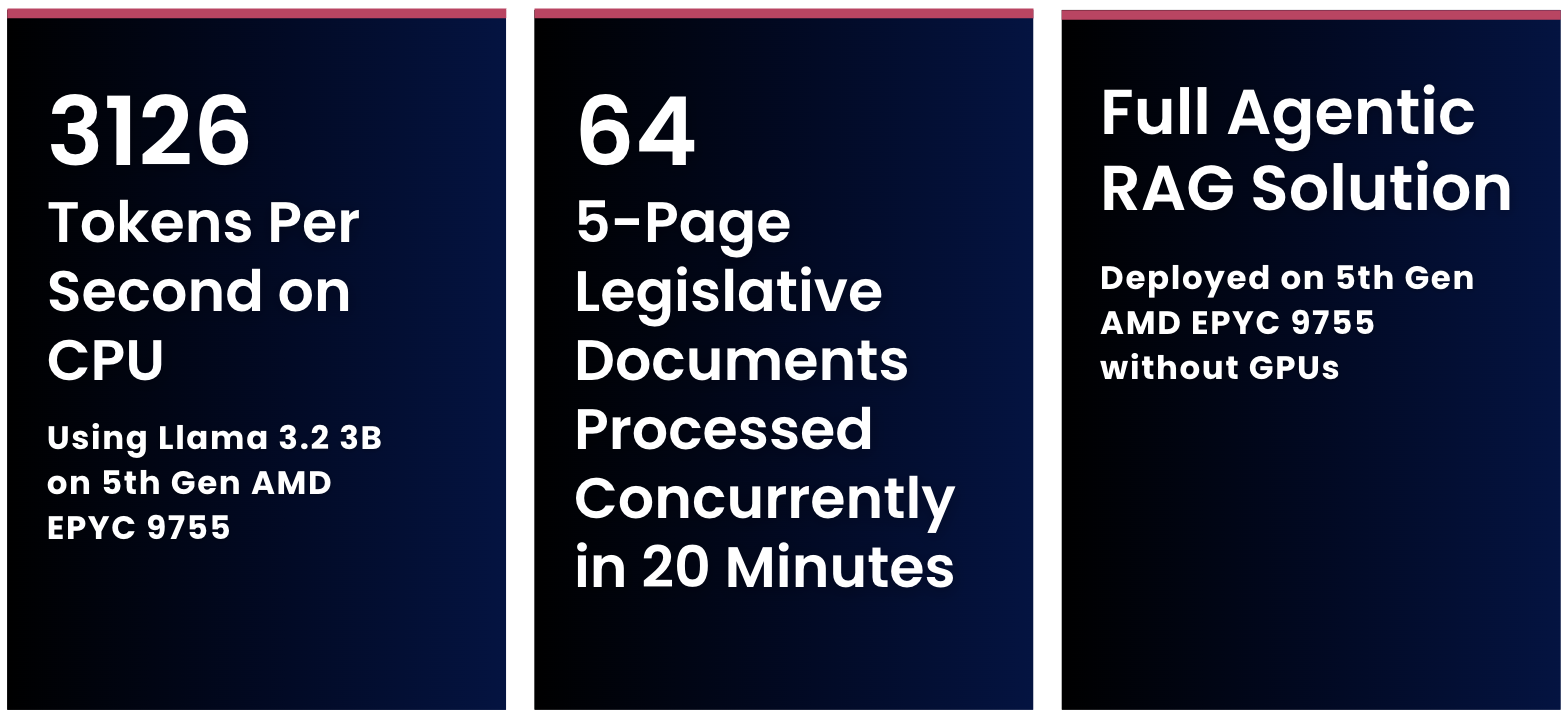

Key Highlights

- AI agents concurrently processed 64 five-page legislative bill documents in 20 minutes

- Developed and deployed full agentic RAG stack with SLMs using 5th Gen AMD EPYC 9755 128-Core Processors without GPUs

- Deployed Llama 3.2 3B, achieving a total throughput of 3126 Tokens/Second while supporting 2048 concurrent requests on 5th Gen AMD EPYC 9755 128-Core Processors

Solution Overview

Metrum AI's legislative assistant streamlines the analysis of legislative bills by using specialized AI agents to automate critical tasks like assessing economic impact, legal compliance, and social or environmental effects. This approach combines AI-driven efficiency with human oversight, enabling analysts to verify findings and make adjustments, resulting in reliable and comprehensive reports.

The solution first lets users upload draft bills and submit them as a processing job, after which it generates a comprehensive summary of each bill. Existing legislation and code documents are converted to embeddings and stored in a vector database for retrieval. Targeted AI agents then analyze the bills for economic, social, and legal impact and compliance, using those existing documents as RAG context. Using a state-of-the-art SLM, Llama 3.2 3B, for text generation, the solution then outputs a bill analysis report that identifies specific sections of the bill to be amended, with references to the original documents. With a user-friendly interface that includes real-time performance monitoring and streamlined report generation, Metrum AI's legislative assistant improves productivity and allows teams to prioritize high-impact legislative tasks.

Figure 1. User Interface for Legislative Bill Analysis Solution.

Performance Benchmarks

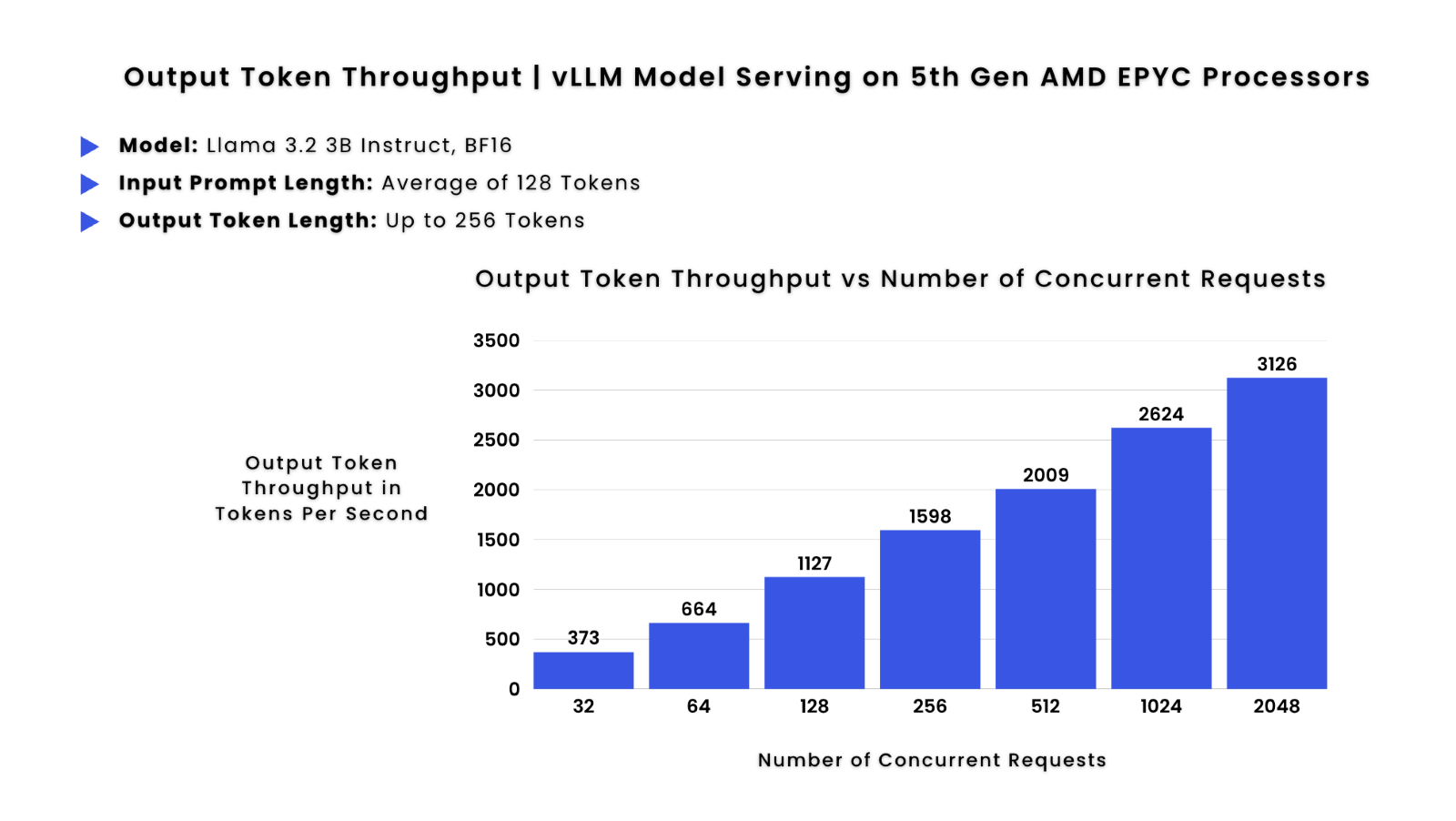

This seamless workflow required a hardware platform that ensured optimal performance and scalability, prompting a thorough evaluation of infrastructure options. Before finalizing the Dell PowerEdge R7725 server with 5th Gen AMD EPYC Processors as the hardware of choice, we conducted vLLM-based performance tests to evaluate throughput in tokens per second using the Llama 3.2 3B model - an industry-leading SLM integral to this solution. In these tests, throughput increases consistently with the number of concurrent requests:

Figure 2. vLLM Model Serving of Llama 3.2 3B with BF16 Precision, showing throughput in tokens per second versus number of concurrent requests.

| Metric | 32 Concurrent Requests | 256 Concurrent Requests | 2048 Concurrent Requests |
|---|---|---|---|
| Total Throughput | 373 Tokens/Second | 1599 Tokens/Second | 3126 Tokens/Second |
| Per-Request Throughput | 11.66 Tokens/Sec/Request | 6.25 Tokens/Sec/Request | 1.53 Tokens/Sec/Request |

As shown above, the low-concurrency scenario (32 concurrent requests) yields a per-request throughput above 10 tokens/second, a commonly cited threshold for interactive applications such as chatbots. The high-concurrency scenario (2048 concurrent requests) yields a per-request throughput of 1.53 tokens/second, which is sufficient for offline batched processing tasks such as document summarization or long content generation. Because our solution uses AI agents to process documents in batches rather than in real time, it can trade per-request speed for total throughput. These results indicate that it can complete legislative analyses faster than traditional, non-agentic approaches, thanks to the high memory capacity and processing power of the EPYC 9755 processors paired with Dell PowerEdge servers.
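The per-request figures in the table follow from dividing total throughput by the number of concurrent requests. The sketch below reproduces that arithmetic from the reported values; the function and variable names are illustrative and not part of the solution's code.

```python
# Total throughput (tokens/second) keyed by number of concurrent requests,
# copied from the benchmark table.
TOTAL_THROUGHPUT = {32: 373, 256: 1599, 2048: 3126}

# Per-request rate cited in the text as the threshold for interactive use.
INTERACTIVE_THRESHOLD = 10.0


def per_request_throughput(total_tokens_per_sec: float, concurrency: int) -> float:
    """Average tokens/second that each concurrent request receives."""
    return total_tokens_per_sec / concurrency


for concurrency, total in TOTAL_THROUGHPUT.items():
    rate = per_request_throughput(total, concurrency)
    mode = "interactive" if rate > INTERACTIVE_THRESHOLD else "batch"
    print(f"{concurrency:>4} requests: {rate:.2f} tokens/sec/request ({mode})")
```

Only the 32-request case clears the interactive threshold; the other two fall into the batch regime that this solution targets.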

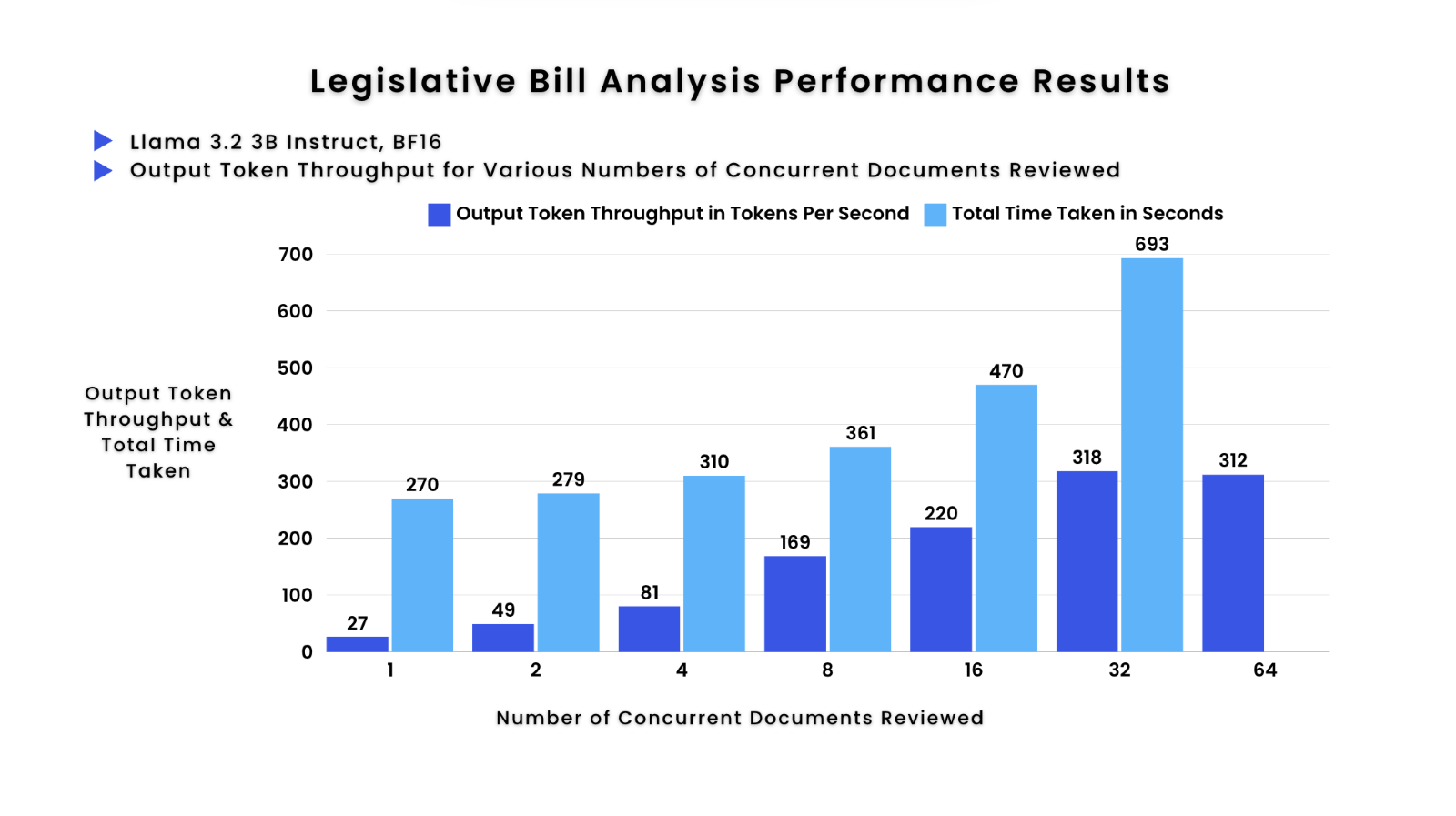

Now that we have completed vLLM-based tests focused solely on the model's performance, we turn our attention to evaluating the full AI agentic RAG stack within this workload. The results of our solution-specific performance tests demonstrate impressive scalability and efficiency in handling concurrent document analysis:

Figure 3. Performance results for legislative bill analysis through the solution, showing total system throughput in tokens/second and total time taken versus number of concurrent documents reviewed.

| Metric | Analyzing 1 Document | Analyzing 32 Concurrent Documents |
|---|---|---|
| Throughput | 27.2 Tokens/Second | 318.1 Tokens/Second |
| Total Time | 270 Seconds | 693 Seconds |

This is an 11.69x increase in throughput for only a 2.57x increase in total processing time when moving from 1 to 32 concurrent documents, which again shows how well AI agents handle batched tasks such as document summarization. By maintaining accuracy while dramatically increasing throughput, the system delivers critical insights quickly: instead of hundreds of hours of last-minute analysis by human experts, organizations can complete automated legislative analysis reviews within an hour of a new legislation announcement.
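The scaling ratios quoted above can be recomputed directly from the table. The sketch below does so and adds one derived comparison, a sequential estimate. That estimate is an assumption made here for illustration: it supposes every document would take as long as the single-document run, which the source does not measure.

```python
# Measurements from the table: throughput in tokens/second, total time in seconds.
single = {"docs": 1, "throughput": 27.2, "seconds": 270}
batch = {"docs": 32, "throughput": 318.1, "seconds": 693}

throughput_gain = batch["throughput"] / single["throughput"]
time_ratio = batch["seconds"] / single["seconds"]

# Average wall-clock time attributable to each document in the batch.
seconds_per_doc = batch["seconds"] / batch["docs"]

# Assumed sequential baseline: 32 documents processed one after another,
# each taking as long as the single-document run.
sequential_seconds = single["seconds"] * batch["docs"]

print(f"throughput gain: {throughput_gain:.2f}x")
print(f"time ratio: {time_ratio:.2f}x")
print(f"seconds per document in batch: {seconds_per_doc:.1f}")
print(f"sequential estimate: {sequential_seconds} s vs measured {batch['seconds']} s")
```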

Hardware Configuration

To power this solution, we selected the Dell PowerEdge R7725 equipped with 5th Gen AMD EPYC 9755 128-Core processors because of its outstanding performance in vLLM-based tests and its high-speed DDR5 memory (up to 6000 MT/s), both of which are critical for running small language models (SLMs) such as Llama 3.2 3B. This hardware combination also excels at supporting various AI agents, the foundation of our legislative bill analysis solution, without compromising speed or accuracy.

Figure 4. Hardware Configuration Details.

| Component | Specification |
|---|---|
| Server | Dell PowerEdge R7725 |
| Processor | 2x AMD EPYC 9755 128-Core Processors |
| Memory | 2946 GB (3 TB) of DDR5 Memory (Memory Speeds up to 6000 MT/s) |
| Drive Bays | Dell NVMe PM1743 RI E3.S 3.84TB |
| Networking | BCM57504 NetXtreme-E (10Gb-200Gb) Ethernet |
| OS | Ubuntu 24.04.1 LTS |

Solution Details and Workflow

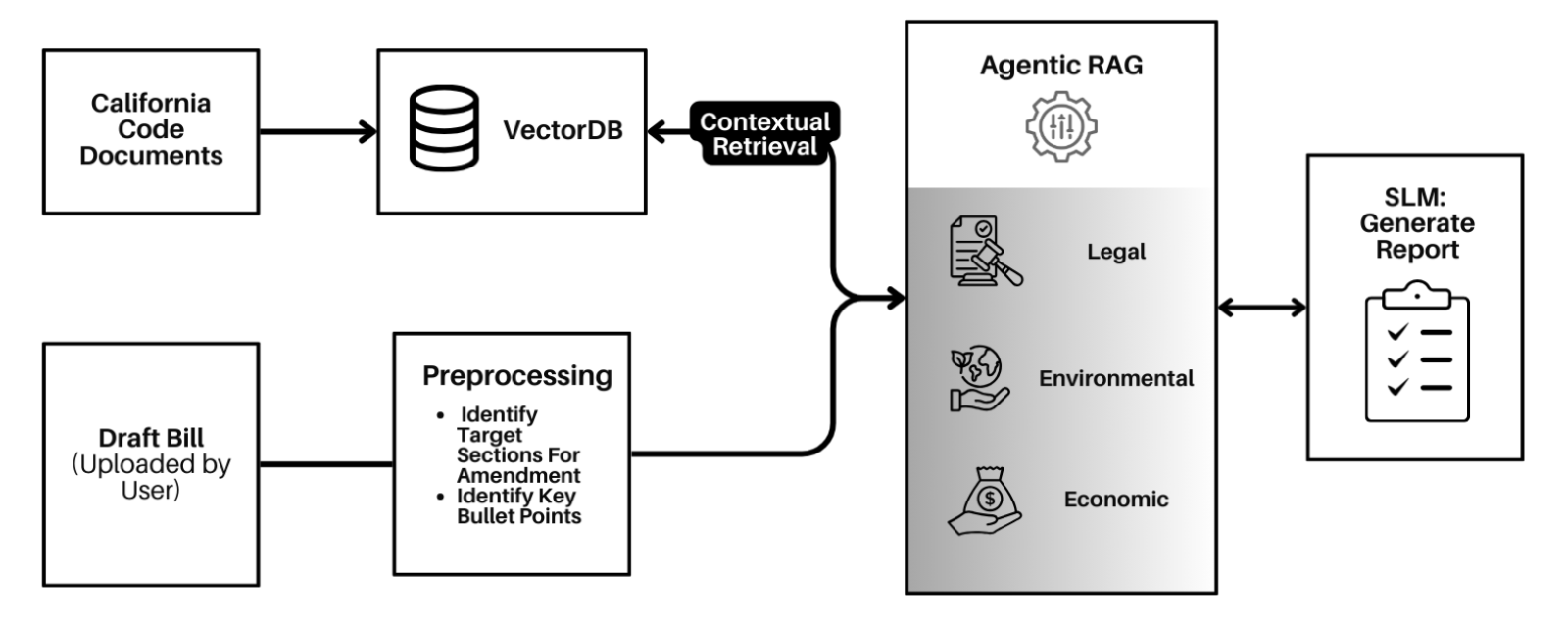

Let's dive into the core of this solution, an agentic retrieval-augmented generation (RAG) based workflow that automates critical aspects of the legislative bill analysis process. Agentic RAG extends traditional RAG by incorporating autonomous agents that can decompose complex tasks, maintain their own context, and execute targeted sub-tasks while coordinating with each other. This approach is integral to evaluating multiple key aspects of the legislation - identifying legal compliance issues, assessing economic impact, and analyzing social or environmental effects - while incorporating continuous learning to enhance accuracy over time. Below, we outline the step-by-step data flow of this system:

Figure 5. Solution Workflow.

Bill Upload and Preprocessing: Users upload draft bills or legislative documents into the system. During preprocessing, the system identifies key sections for amendment and extracts relevant bullet points summarizing the bill's purpose and provisions. The preprocessing stage also includes document chunking, semantic segmentation, and metadata extraction to optimize retrieval performance.
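The source does not specify how chunking is implemented. As a rough illustration of the idea, the sketch below splits a document into overlapping word windows and attaches minimal metadata. The `Chunk` fields, the window size, and the overlap are all assumptions, and fixed word windows are only a simple stand-in for semantic segmentation.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_id: str  # hypothetical metadata: which bill the chunk came from
    index: int   # position of the chunk within the document
    text: str


def chunk_document(doc_id: str, text: str, max_words: int = 200, overlap: int = 40) -> list[Chunk]:
    """Split text into overlapping word windows."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be non-negative and smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        window = words[start:start + max_words]
        chunks.append(Chunk(doc_id, len(chunks), " ".join(window)))
        if start + max_words >= len(words):
            break  # this window already reaches the end of the document
    return chunks
```

The overlap keeps a provision that straddles a window boundary retrievable from at least one chunk, at the cost of some duplicated text in the index.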

Contextual Retrieval via Vector Database: The system uses a vector database (VectorDB) to store and retrieve legislative context (California Code documents), enabling comparison of the bill's provisions against existing codes. This contextual retrieval helps the AI agents accurately identify overlaps, conflicts, or specific areas requiring amendments. The vector embeddings are generated with an industry-standard embeddings model, bge-large-en, to capture complex semantic relationships.
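To make the retrieval step concrete, the sketch below ranks stored passages by cosine similarity to a query vector. It is a toy stand-in: in the solution the vectors would come from the embeddings model and the search would run inside the vector database, whereas here the three-dimensional vectors and section identifiers are made up for illustration.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Return the k stored passages most similar to the query vector."""
    scored = [(pid, cosine_similarity(query, vec)) for pid, vec in index.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


# Toy vectors with hypothetical section identifiers, for illustration only.
code_index = {
    "water-code-sec-a": [0.9, 0.1, 0.0],
    "labor-code-sec-b": [0.1, 0.9, 0.1],
    "vehicle-code-sec-c": [0.0, 0.2, 0.9],
}
bill_vector = [0.8, 0.3, 0.0]

matches = top_k(bill_vector, code_index, k=2)
for passage_id, score in matches:
    print(f"{passage_id}: {score:.3f}")
```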

Agentic RAG Analysis: AI agents are deployed to focus on various dimensions of the bill, specifically legal, economic, and environmental impacts. These agents leverage the retrieved context to analyze the documents thoroughly. Each agent maintains its own memory state and reasoning chain, allowing for persistent context awareness throughout the analysis process. The agents communicate through a structured message-passing protocol, enabling collaborative analysis when issues span multiple domains. Each agent generates targeted insights for its domain - legal, economic, or environmental - which are then synthesized into actionable recommendations.
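The source does not describe the agents' memory or messaging format. The sketch below is one hypothetical way to structure per-agent memory and a simple message queue. The `Agent` and `Message` classes are invented for illustration, and `analyze` is a placeholder where a real system would call the SLM.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Message:
    sender: str
    recipient: str
    content: str


@dataclass
class Agent:
    domain: str
    memory: list[str] = field(default_factory=list)  # per-agent state kept across steps

    def analyze(self, bill_text: str, context: list[str]) -> str:
        # Placeholder for an SLM call: the "finding" only records what was seen.
        finding = f"[{self.domain}] reviewed {len(bill_text.split())} words with {len(context)} passages"
        self.memory.append(finding)
        return finding

    def receive(self, message: Message) -> None:
        self.memory.append(f"note from {message.sender}: {message.content}")


def run_agents(bill_text: str, context: list[str], domains: list[str]) -> dict[str, Agent]:
    agents = {d: Agent(d) for d in domains}
    inbox = deque()
    for agent in agents.values():
        finding = agent.analyze(bill_text, context)
        # Broadcast each finding so other agents can account for cross-domain issues.
        for other in agents:
            if other != agent.domain:
                inbox.append(Message(agent.domain, other, finding))
    while inbox:
        msg = inbox.popleft()
        agents[msg.recipient].receive(msg)
    return agents
```

Calling `run_agents(bill_text, passages, ["legal", "economic", "environmental"])` leaves each agent with its own finding plus one note from each of the other two agents in its memory.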

Comprehensive Report Generation: Once all agents complete their tasks, the system leverages an SLM, Llama 3.2 3B, to compile these findings and generate a detailed report, which includes a summary of the bill, key insights, and recommendations for legal, economic, and environmental considerations with specific references to the original sections in the draft bill. The report generation process implements a hierarchical summarization strategy, first consolidating agent-specific findings before synthesizing them into a cohesive narrative. The result is a fully synthesized report that aids lawmakers in making informed decisions.
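The two-level summarization described above can be sketched as follows. The `summarize` callable stands in for a call to the SLM, and the default `truncate_summarizer` simply keeps the first few words so that the example runs without a model. Both names are hypothetical.

```python
from typing import Callable


def truncate_summarizer(text: str, max_words: int = 30) -> str:
    """Stand-in for an SLM summarization call: keeps the first max_words words."""
    return " ".join(text.split()[:max_words])


def hierarchical_report(
    findings_by_agent: dict[str, list[str]],
    summarize: Callable[[str], str] = truncate_summarizer,
) -> str:
    # Level 1: consolidate each agent's findings into one domain summary.
    domain_summaries = {
        domain: summarize(" ".join(findings))
        for domain, findings in findings_by_agent.items()
    }
    # Level 2: synthesize the domain summaries into a single overview.
    overview = summarize(" ".join(domain_summaries.values()))
    sections = [f"{domain.title()}: {summary}" for domain, summary in domain_summaries.items()]
    return "\n".join([f"Overview: {overview}", *sections])
```

Summarizing per domain first keeps each model call's input short, which matters for a small model with a limited context window.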

This enhanced multi-agent RAG architecture significantly improves upon traditional single-agent RAG systems by enabling more comprehensive and nuanced analysis of complex legislative documents while maintaining scalability and reliability.

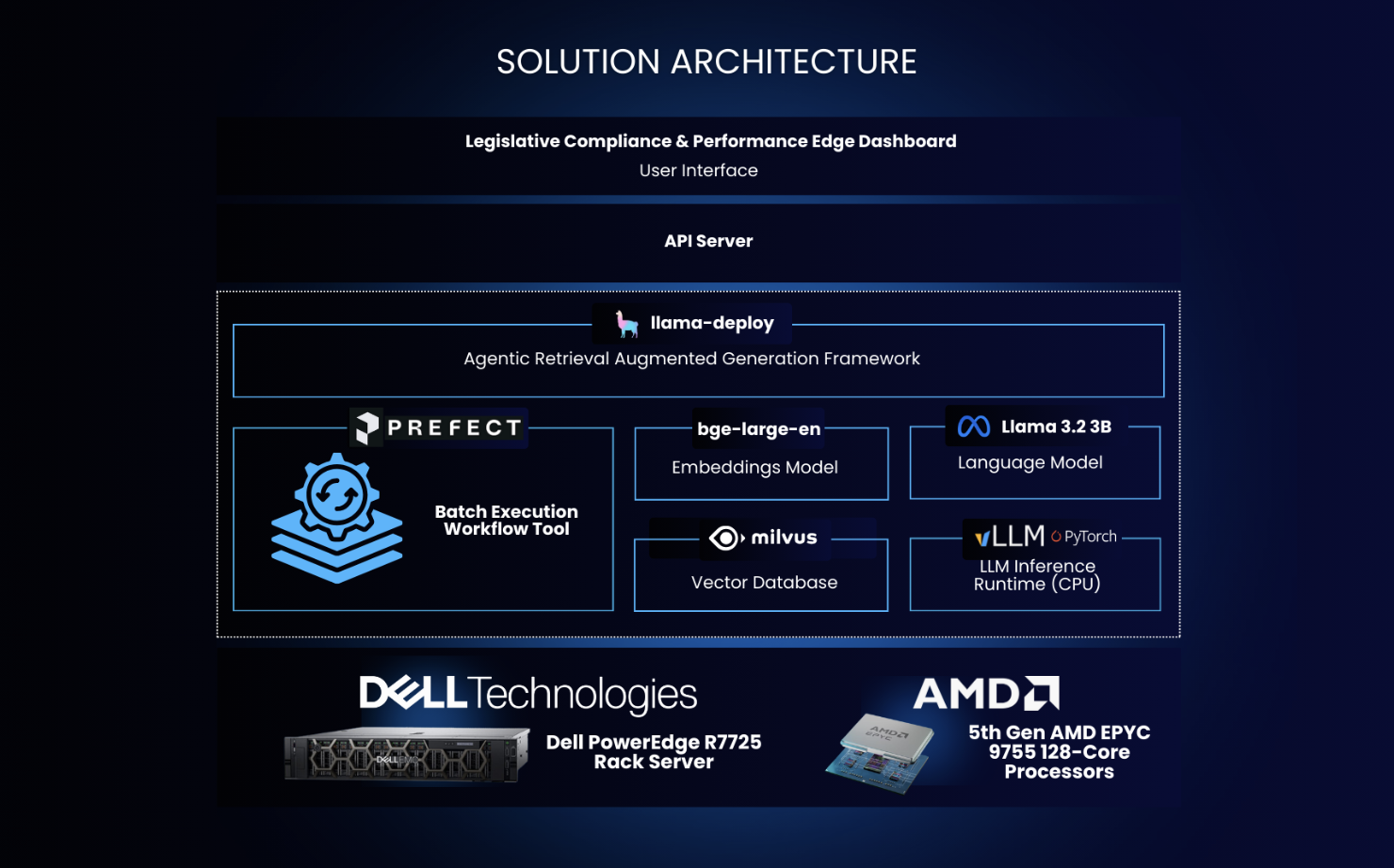

Solution Architecture

Figure 6. Solution Architecture.

To enable these solution capabilities, the software stack includes the following key components:

- vLLM (v0.5.3.post1), an industry-standard library for optimized open-source large language model (LLM) serving, with support for AMD ROCm 6.1.

- llama-deploy, an async-first framework for building, iterating, and productionizing multi-agent systems.

- Llama 3.2 3B Model, an industry-leading open-weight small language model with three billion parameters, served using vLLM with AMD ROCm optimizations.

- LlamaIndex, a popular open-source retrieval-augmented generation framework.

- bge-large-en embeddings model, one of the top-ranked text embeddings models, running with Hugging Face APIs.

- MilvusDB, an open-source vector database with high-performance embedding and similarity search.
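vLLM can expose an OpenAI-compatible HTTP API, so a component in this stack could query the served model with a plain HTTP request. The sketch below builds such a request using only the standard library. The host, port, and model identifier are assumptions, since the source does not state how the server is configured.

```python
import json
import urllib.request

# Assumptions: a vLLM OpenAI-compatible server is listening locally on port 8000
# and was started with this model identifier. Adjust both for a real deployment.
BASE_URL = "http://localhost:8000/v1"
MODEL = "meta-llama/Llama-3.2-3B-Instruct"


def build_chat_request(prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": 0.0,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt: str) -> str:
    """Send the request and return the generated text (requires a running server)."""
    with urllib.request.urlopen(build_chat_request(prompt)) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Keeping request construction separate from sending makes the payload easy to inspect or unit test without a live server.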

Summary

As demonstrated in our implementation, government organizations can now overcome the challenges of processing large volumes of legislative bills by leveraging small language models (SLMs) and agentic retrieval-augmented generation (RAG) techniques. This solution streamlines legislative bill analysis by automating critical tasks like data collection, verification, and summarization - enabling organizations to obtain timely insights through the use of intelligent AI agents that operate semi-autonomously, ideal for offline, batched workloads.

Powered by the Dell PowerEdge R7725 server with 5th Gen AMD EPYC 9755 128-Core processors, this agentic RAG-based solution demonstrates how modern CPU-based infrastructure can efficiently handle advanced AI workflows. By running entirely on AMD EPYC processors, the solution allows organizations to maximize existing infrastructure and avoid costly hardware investments, while also supporting mixed workloads for greater versatility. This implementation has proven scalable across industries beyond legislative analysis, showcasing the broad applicability of CPU-based AI solutions.

To learn more about this solution or request access to our reference implementation, please contact us at contact@metrum.ai.

"The advantage of AI agents presents an unparalleled productivity opportunity, enabling AI to operate independently to accomplish tasks with minimal human intervention. With AI agents, we can run agentic RAG workloads or other offline, batched tasks entirely on CPUs, optimizing resource utilization and also significantly enhancing efficiency and scalability."

Chetan Gadgil, CTO at Metrum AI

Key Concepts

This solution leverages the following technical concepts:

Retrieval-Augmented Generation (RAG)

Retrieval-Augmented Generation (RAG) combines retrieval-based and generative NLP models to produce accurate, contextually relevant outputs by incorporating external knowledge from a database or corpus. Our legislative bill analysis solution leverages RAG to dynamically retrieve and integrate relevant legal, economic, and environmental data from uploaded code documents to generate detailed, fact-based summaries and insights. This ensures the solution provides timely, accurate analysis, streamlining decision-making processes for government agencies.
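The "augmented" part of RAG usually comes down to placing retrieved passages in the model's prompt. The sketch below shows one minimal way to assemble such a prompt. The wording of the instructions and the `(source, text)` passage format are assumptions, not the solution's actual prompt.

```python
def build_rag_prompt(question: str, passages: list[tuple[str, str]], max_passages: int = 3) -> str:
    """Assemble a prompt that asks the model to answer only from retrieved passages."""
    selected = passages[:max_passages]  # passages are assumed to be ranked already
    context = "\n".join(f"[{source}] {text}" for source, text in selected)
    return (
        "Answer using only the context below and cite sources in brackets.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

Tagging each passage with its source lets the generated answer cite the original section.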

AI Agents and Agentic Workflows

AI agents are autonomous software tools that process information, make decisions, and take actions to achieve specific goals using techniques like machine learning and natural language processing. In agentic workflows, multiple AI agents collaborate, each with specialized roles, to break complex tasks into smaller steps for more accurate and efficient execution.

Our legislative bill analysis solution exemplifies this approach, employing AI agents for distinct tasks like legal, economic, and environmental impact assessments. These agents iteratively refine their outputs, ensuring detailed and reliable insights that support decision-making while streamlining the analysis process for government agencies.

Diving Into SLMs

Small Language Models (SLMs) offer high performance with lower computational demands, enabling real-time processing of legislative data without the use of specialized hardware. In this section, we provide more context on these compact models and their importance for resource-efficient, domain-specific applications.

Why SLMs?

SLMs are gaining significance as a more efficient and practical alternative to large language models (LLMs). Typically with 1-3 billion parameters, these models offer several advantages: they require less computational power and training data, are more cost-effective to develop and deploy, and can be fine-tuned for specific tasks or domains more easily. Recent research has shown that SLMs, such as Microsoft's Phi series, trained on high-quality data can achieve performance comparable to much larger models in certain tasks. While LLMs are suited for complex, general tasks requiring broad knowledge and language understanding, such as advanced chatbots, content generation, and open-ended question answering, SLMs are ideal for domain-specific applications where efficiency, low latency, and resource constraints are critical, such as real-time language processing on mobile devices or targeted tasks in healthcare or customer support.

SLMs on EPYC

SLMs are driving AI adoption across a broad range of industry-specific use cases. They are used in predictive maintenance systems for vehicles, in human interaction scenarios such as chatbots and virtual assistants, and, once fine-tuned, to understand industry-specific terminology and common customer requests in customer service scenarios. AMD EPYC CPUs provide an ideal platform for running SLMs, especially for mixed AI workloads, offering a cost-effective solution for businesses with existing CPU infrastructure. Their versatility supports seamless integration of AI tasks like inference with traditional computing workloads, avoiding the need for significant infrastructure changes. While GPUs excel in compute-heavy tasks like training large models, AMD EPYC CPUs provide strong performance for lightweight AI and mixed-use cases, making them a practical choice for businesses not ready to overhaul their systems for AI adoption.

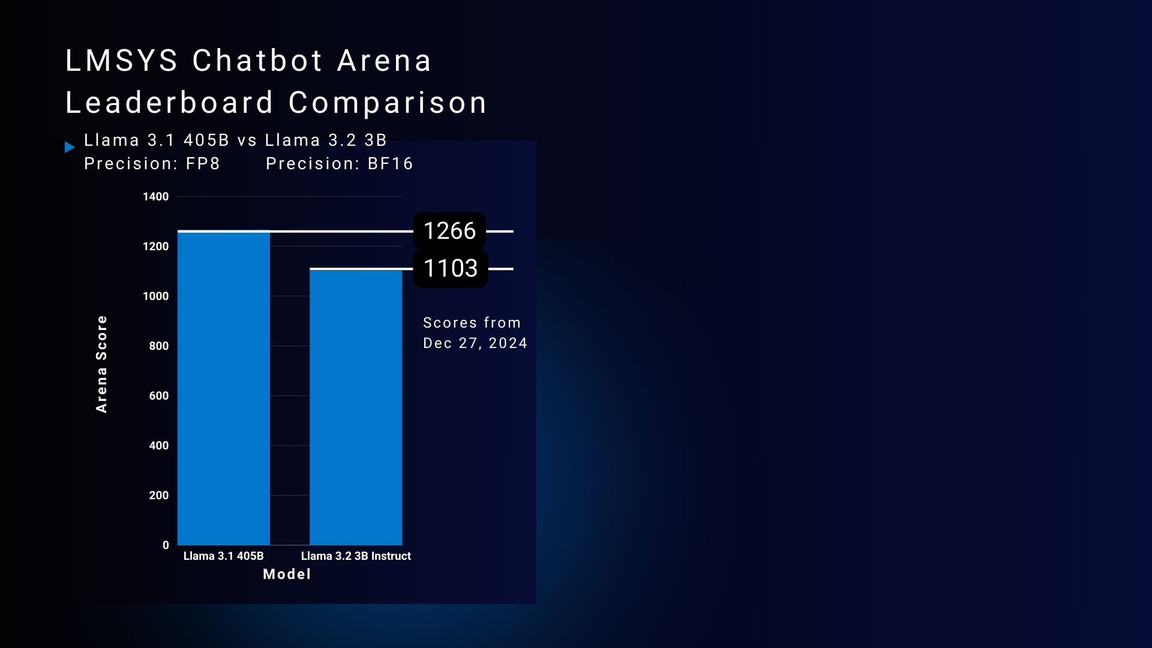

SLMs: Performance vs Cost

To understand why small language models are an effective and cost-efficient alternative to industry-leading large language models, we can take a closer look at model accuracy when deployed on GPUs through the LMSYS Chatbot Arena Leaderboard, a crowdsourced open platform for LLM evaluation. Llama 3.2 3B Instruct, requiring only one state-of-the-art GPU for deployment, receives an Arena Score of 1103, while Meta's Llama 3.1 405B Instruct model in FP8 precision, requiring eight state-of-the-art GPUs for deployment, receives an Arena Score of 1266. At roughly 87% of the larger model's Arena Score, Llama 3.2 3B demands significantly less hardware while still supporting hundreds of concurrent users at a much lower cost. Once fine-tuned on industry-specific data or integrated into RAG pipelines, small language models can even outperform large language models on industry-specific use cases, ultimately providing a much more advantageous cost-to-performance tradeoff.
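The comparison rests on two simple ratios, recomputed below from the figures quoted in the paragraph above. Note that Arena Scores are Elo-style ratings, so a plain ratio of scores is only a rough proxy for relative quality; it is used here because the text frames the comparison that way.

```python
# Arena Scores and GPU counts as quoted in the text.
models = {
    "Llama 3.2 3B Instruct": {"arena_score": 1103, "gpus": 1},
    "Llama 3.1 405B Instruct (FP8)": {"arena_score": 1266, "gpus": 8},
}

small = models["Llama 3.2 3B Instruct"]
large = models["Llama 3.1 405B Instruct (FP8)"]

score_ratio = small["arena_score"] / large["arena_score"]
gpu_ratio = small["gpus"] / large["gpus"]

print(f"score ratio: {score_ratio:.1%}")
print(f"GPU ratio: {gpu_ratio:.1%}")
```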

Figure 7. LMSYS Chatbot Arena Leaderboard Score Comparison of Llama 3.1 405B with FP8 Precision and Llama 3.2 3B with BF16 Precision.

References

- AMD images: AMD.com, AMD Partner Resource Library, https://www.amd.com/en/partner/resources/resource-library.html

- Dell images: Dell.com

- https://www.microsoft.com/en-us/research/blog/phi-2-the-surprising-power-of-small-language-models/

Copyright © 2025 Metrum AI Inc. All Rights Reserved. Metrum AI, the Metrum AI logo, and other trademarks are trademarks of Metrum AI Inc. The analysis in this document was conducted by Metrum AI Inc. and commissioned by Dell Technologies.

Dell Technologies, Dell, Dell PowerEdge, Dell logo, and other trademarks are trademarks of Dell Inc. or its subsidiaries. AMD, AMD logo, AMD EPYC, AMD ROCm, and combinations thereof are trademarks of Advanced Micro Devices, Inc.

Other trademarks may be the property of their respective owners.

DISCLAIMER: Performance varies by hardware and software configurations, including testing conditions, system settings, application complexity, the quantity of data, batch sizes, software versions, libraries used, and other factors. The results of performance testing provided are intended for informational purposes only and should not be considered as a guarantee of actual performance.

Metrum AI believes the information in this document is accurate as of its publication date. The information is subject to change without notice.