Executive Summary

AI workloads are reshaping datacenters faster than operators can adapt. Rack power densities that once sat at 5-10 kW now routinely reach 50-100 kW or more, overwhelming traditional air cooling and accelerating the shift to liquid cooling - a market expected to reach $22.6B by 2034. Despite its 1,000x higher heat-transfer efficiency, liquid cooling introduces new operational risk: even a 1-2 PSI pressure drop or minor flow disruption can trigger thermal throttling or hardware failure in seconds, leaving human operators and conventional monitoring systems too little time to react.

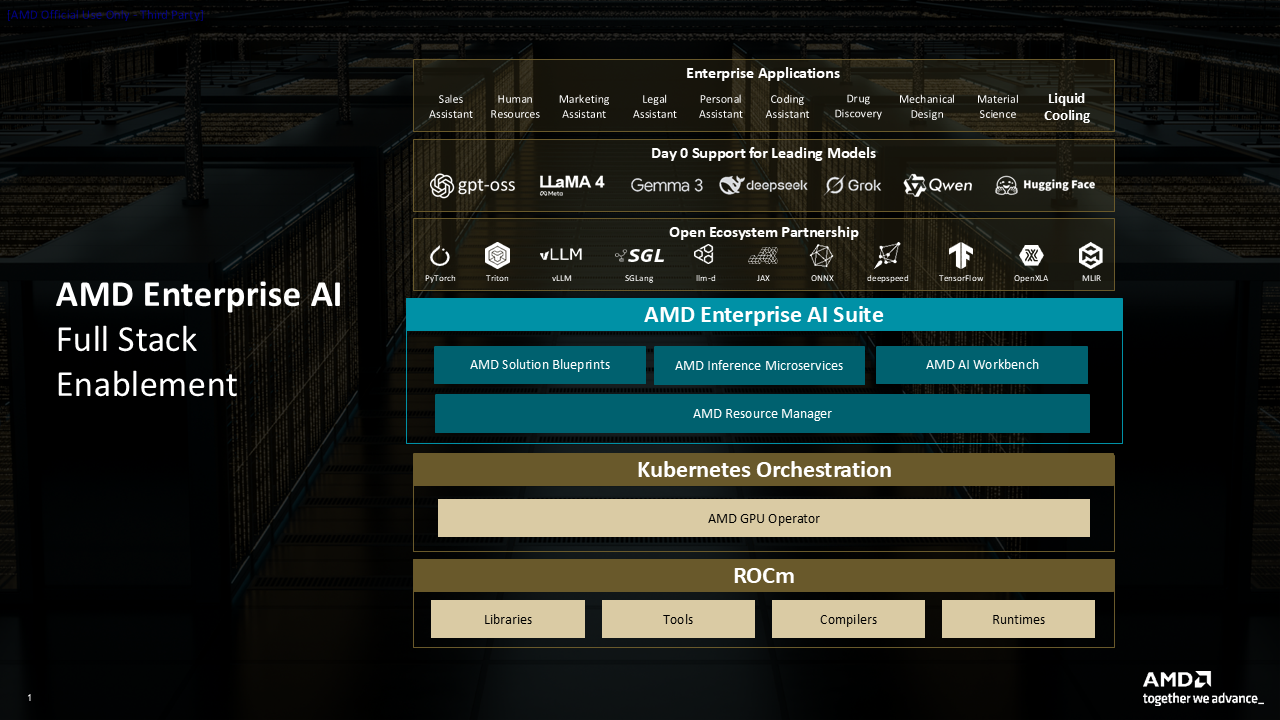

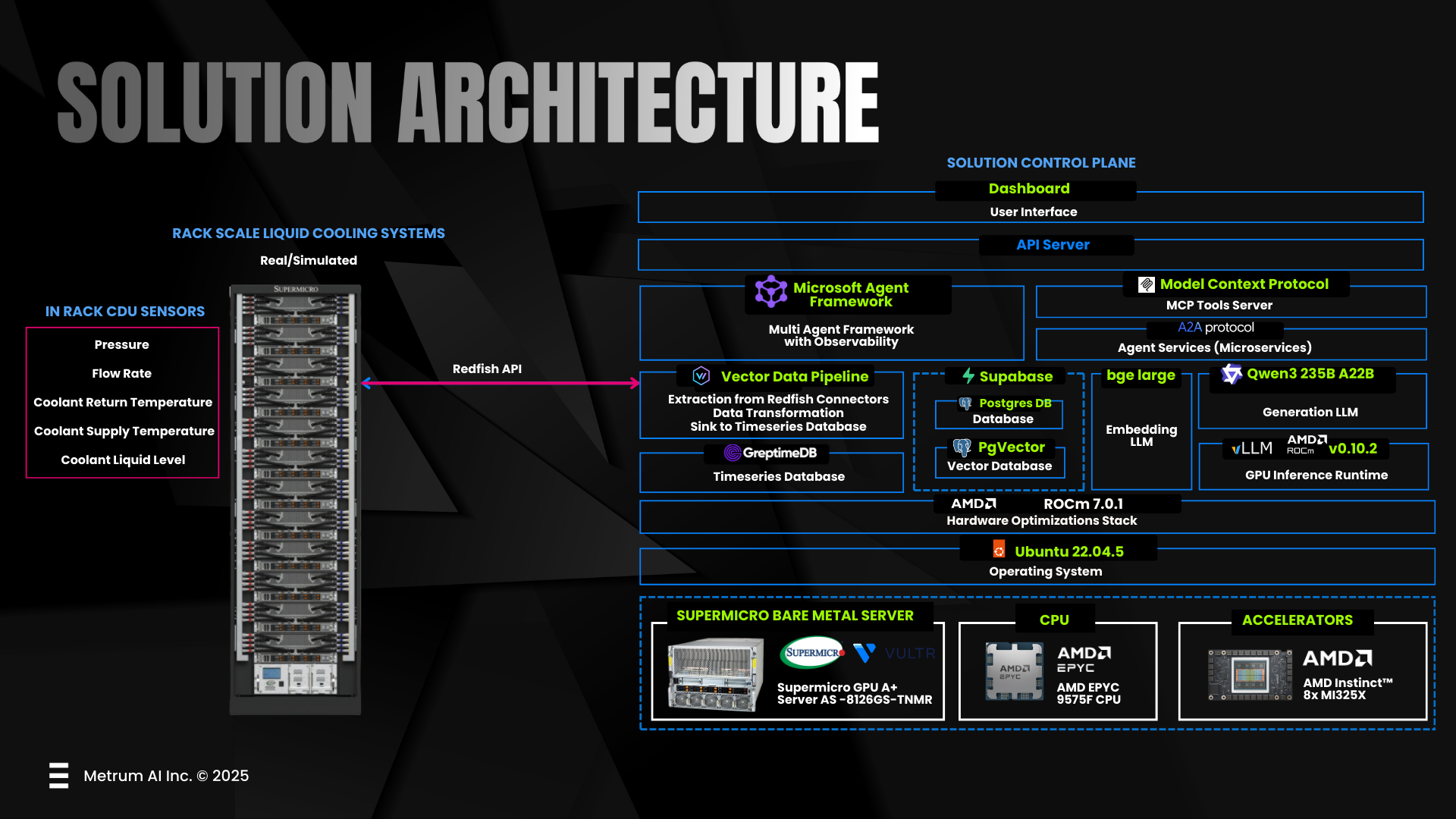

To address this, Supermicro, AMD, and Metrum AI developed a multi-agent cooling-control solution powered by AMD ROCm. ROCm's open-source foundation and optimized GPU libraries enable multiple AI agents to collaborate, from monitoring signals and diagnosing issues to predicting failures and coordinating corrective actions. Embedded directly into the cooling control plane, these agents continuously interpret fast-changing telemetry and autonomously stabilize the system long before manual intervention is possible.

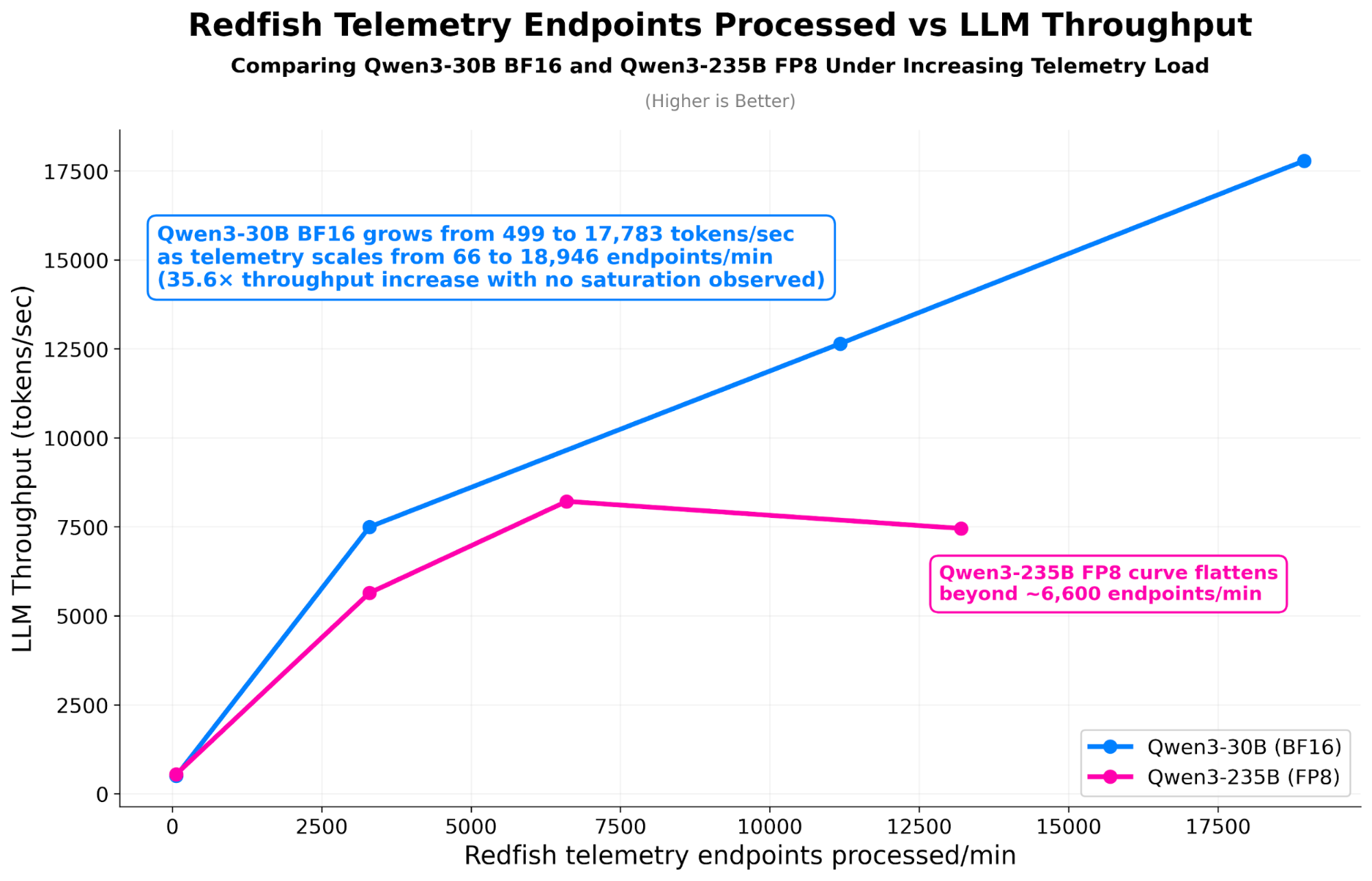

To sustain this level of responsiveness at scale, the solution leverages the 256 GB of HBM3E memory within the AMD Instinct MI325X accelerator. This large memory capacity allows large-scale reasoning models, such as Qwen3-235B FP8, to operate fully in-memory, enabling deep, continuous analysis of thermal data without the latency of offloading or the information loss of context truncation. Validated in datacenter-scale simulations, the framework successfully monitored 1,000 servers across 200 racks, processing 13,198 telemetry endpoints per minute while sustaining a reasoning throughput of 8,214 tokens per second. By combining Supermicro's robust infrastructure, AMD's accelerated compute, and Metrum AI's orchestration, this architecture transforms cooling from a reactive maintenance burden into a predictive, self-optimizing system.

Solution Overview

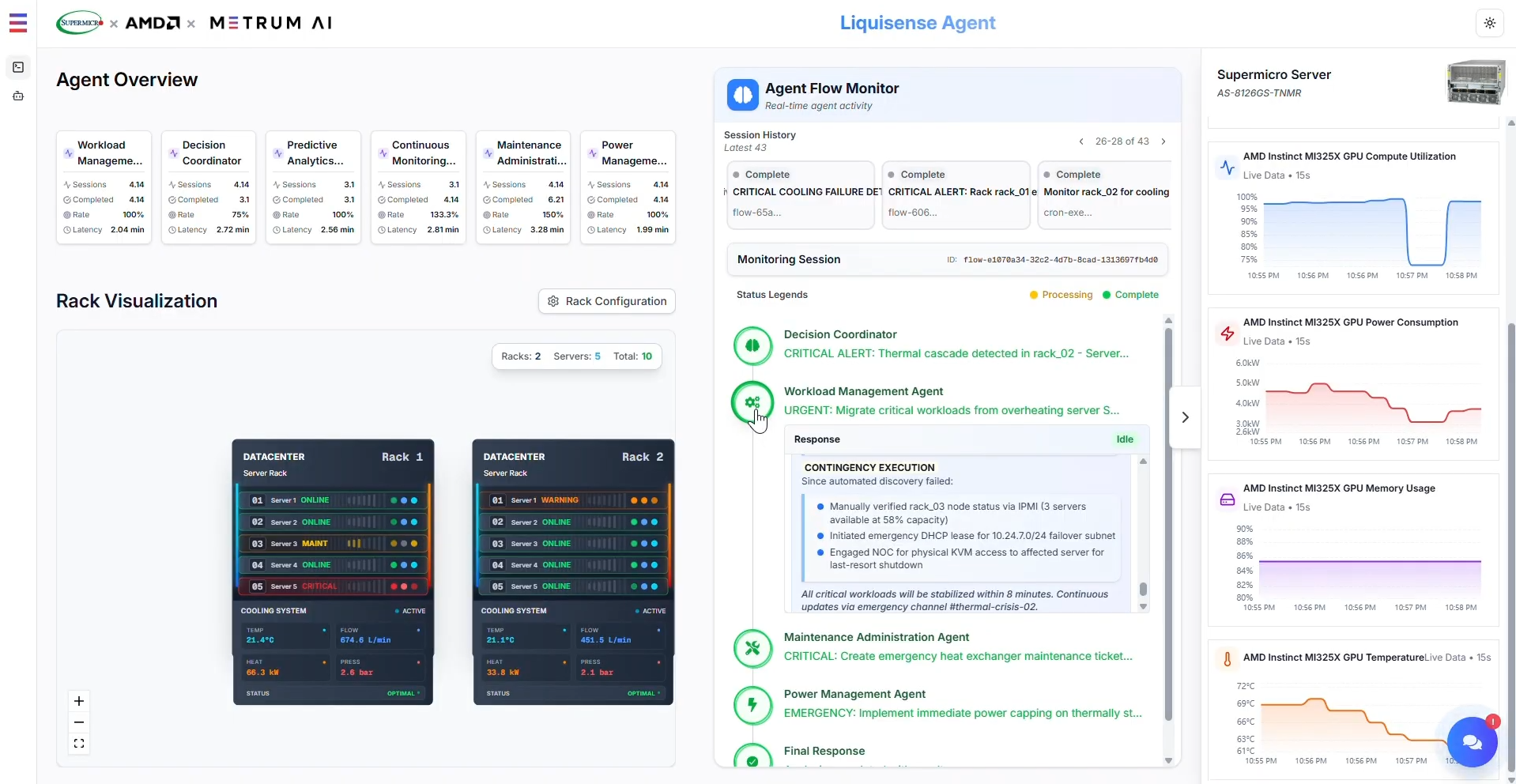

Supermicro, AMD, and Metrum AI have deployed a breakthrough autonomous control framework that fundamentally redefines datacenter operations. While traditional monitoring tools function as passive observers - alerting human operators only after a threshold is breached - this solution introduces active, agent-based intelligence directly into the cooling control plane. By replacing reactive human intervention with sub-second, machine-speed decision-making, the system delivers a unique capability that conventional CPU-centric infrastructure cannot replicate: the ability to predict and prevent catastrophic hydraulic failures before they occur. This shift from passive supervision to active, closed-loop remediation represents a critical evolution in managing the complexity of high-density AI infrastructure.

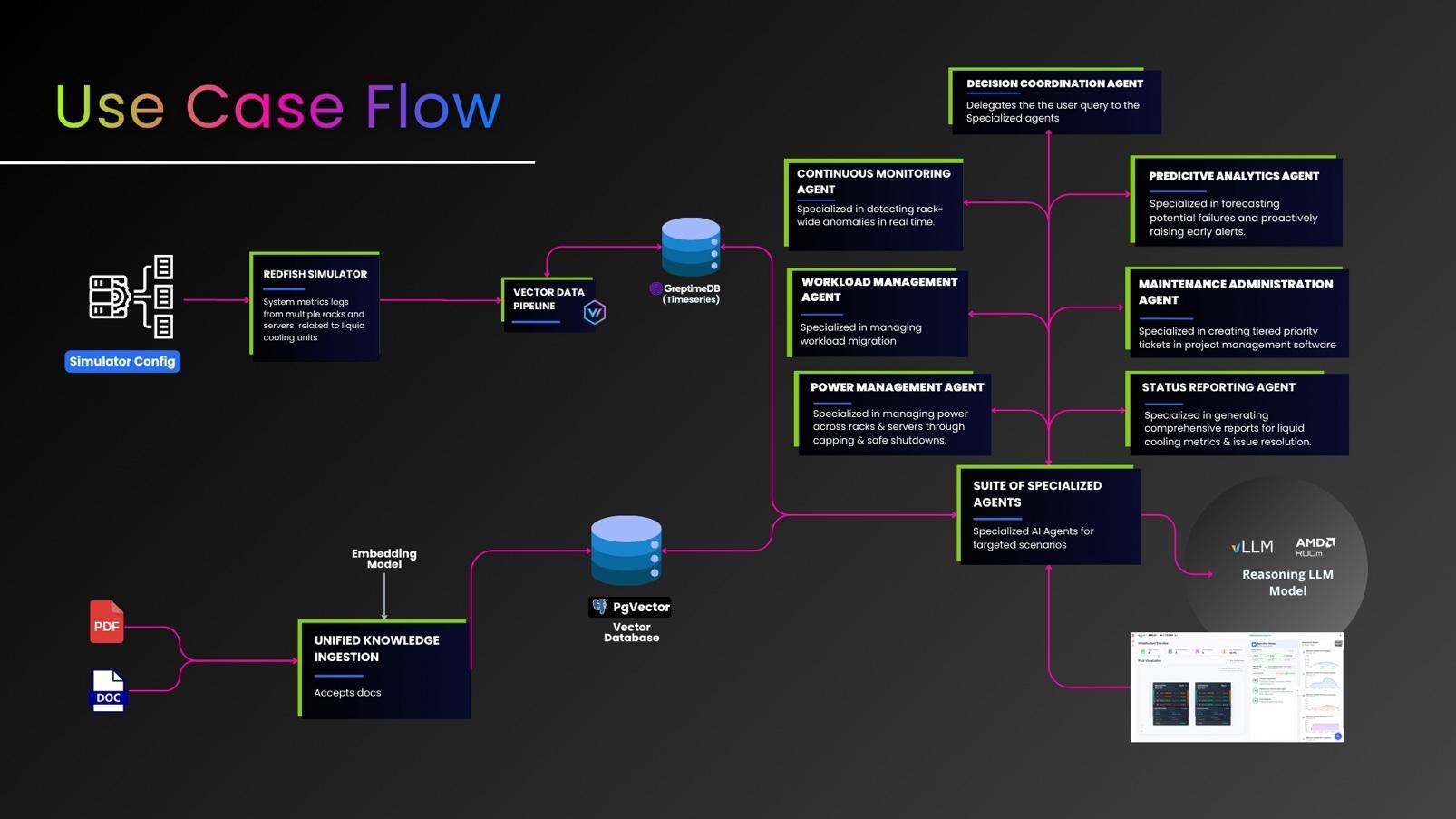

To achieve this unprecedented level of autonomy, the solution implements a domain-specific version of the AMD Enterprise AI platform, mapped directly to Supermicro A+ hardware. Rather than offloading critical data to a central cloud, the architecture processes Redfish telemetry locally using a Layered Intelligence Model. This model ingests raw sensor data - such as flow rate, pressure, and vibration - and converts it into structured vector embeddings, enabling real-time signal correlation and historical recall directly at the rack edge.
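
The idea of turning sensor readings into vectors for historical recall can be illustrated with a minimal sketch. It is not the platform's embedding pipeline: the sensor names, normalization ranges, and incident labels below are hypothetical, and a hand-built, range-normalized feature vector stands in for whatever embedding model the solution actually uses.

```python
import math

# Hypothetical normalization ranges per sensor channel (assumed values).
SENSOR_RANGES = {
    "flow_lpm": (0.0, 60.0),
    "pressure_psi": (0.0, 50.0),
    "vibration_mm_s": (0.0, 10.0),
}


def embed(reading: dict) -> list[float]:
    """Clamp each channel to its range and scale it to [0, 1], in a fixed order."""
    vector = []
    for name, (low, high) in SENSOR_RANGES.items():
        value = min(max(reading[name], low), high)
        vector.append((value - low) / (high - low))
    return vector


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def recall(history: list[tuple[list[float], str]], reading: dict) -> str:
    """Return the label of the stored incident most similar to the reading."""
    query = embed(reading)
    best = max(history, key=lambda item: cosine_similarity(item[0], query))
    return best[1]


# Two illustrative past states stored as (vector, label) pairs.
history = [
    (embed({"flow_lpm": 40.0, "pressure_psi": 30.0, "vibration_mm_s": 1.0}), "nominal"),
    (embed({"flow_lpm": 15.0, "pressure_psi": 12.0, "vibration_mm_s": 7.0}), "pump degradation"),
]
current = {"flow_lpm": 17.0, "pressure_psi": 13.0, "vibration_mm_s": 6.5}
match = recall(history, current)
```

With these illustrative values, the current reading sits much closer to the stored "pump degradation" vector than to the nominal one, so `recall` returns that label.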

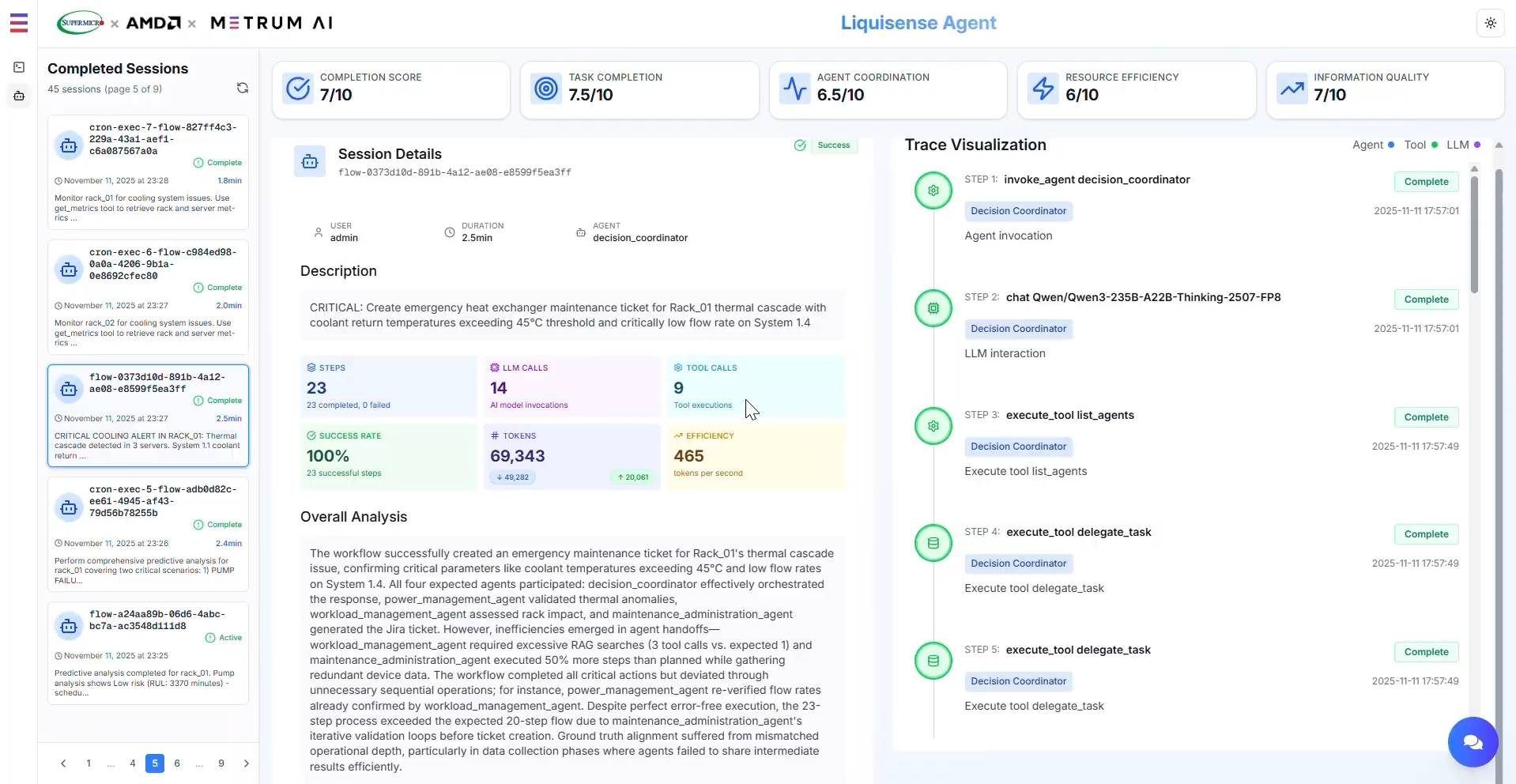

Figure 1. Multi-Agent Autonomous Cooling Control Platform.

The primary technical enabler of this autonomy is the ability to run massive reasoning models entirely within the accelerator's memory. Leveraging the 256 GB of HBM3E memory on AMD Instinct MI325X GPUs, the system hosts the Qwen3-235B FP8 model without activation offloading or context truncation. This allows the agents to maintain a complete "chain-of-thought" during complex failure scenarios, ensuring that multi-step reasoning loops converge on a root cause faster and more deterministically than smaller, memory-constrained models. This intelligence drives a network of specialized agents that communicate via the Model Context Protocol (MCP), each functioning as a distinct microservice responsible for a specific aspect of physical stability.
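
For readers unfamiliar with MCP, the sketch below shows the general shape of a tool invocation message. MCP messages follow JSON-RPC 2.0, and tools are invoked with the `tools/call` method; the tool name `set_power_cap` and its arguments are hypothetical and are not taken from the solution itself.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request that asks an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments, for illustration only.
request = make_tool_call(
    1, "set_power_cap", {"rack_id": "rack-017", "limit_watts": 40000}
)
wire = json.dumps(request)  # serialized form sent over the MCP transport
```

Under this pattern, each agent would expose its capabilities as named tools, and the coordinating agent would issue requests like the one above rather than calling agent code directly.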

Agent Architecture

| Agent | Function |
|---|---|
| Continuous Monitoring Agent | Continuously polls Redfish sensors for anomalies such as reduced flow or rising return temperatures. Detects cascading thermal events or gradual leaks before thresholds are exceeded. |
| Decision Coordination Agent | Serves as the central orchestrator, aggregating signals from all other agents. Correlates telemetry through historical embeddings to determine root cause and initiates corrective sequences using LLM reasoning (Qwen3-235B FP8). |
| Power Management Agent | Issues Redfish control actions for power capping, fan and pump modulation, or emergency shutdowns. Prevents cascading heat buildup and stabilizes rack performance under load imbalance. |
| Workload Management Agent | Migrates computational tasks across GPU clusters using ROCm's distributed memory model. Balances thermal load distribution to sustain uptime during cooling remediation. |
| Predictive Analytics Agent | Applies ROCm-accelerated ML models to vibration and pressure data to forecast pump degradation or coolant chemistry drift. Generates preventive maintenance tickets with remaining useful life (RUL) estimates. |
| Maintenance Administration Agent | Records incidents, issues, and maintenance tickets, and verifies closure once corrective actions are complete. |
| Status Reporting Agent | Produces on-demand performance and incident reports accessible via the dashboard chat interface. Summarizes thermal stability, anomaly root cause, and action efficacy. |
| System Recovery Agent | Validates successful remediation by ensuring stable sensor readings post-correction. Re-enables full rack workloads after confirmation. |
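
The division of labor in the table can be sketched as a coordinator that turns one anomaly into an ordered list of actions for the other agents. This is a simplified illustration: the `Anomaly` fields, the severity cutoffs, and the action strings are hypothetical, and fixed rules stand in for the LLM reasoning that the Decision Coordination Agent performs in the actual solution.

```python
from dataclasses import dataclass, field


@dataclass
class Anomaly:
    rack_id: str
    kind: str        # hypothetical label, e.g. "low_flow"
    severity: float  # assumed scale from 0.0 (benign) to 1.0 (critical)


@dataclass
class Coordinator:
    """Maps an anomaly to actions for the power, workload, maintenance, and recovery agents."""

    log: list = field(default_factory=list)

    def handle(self, anomaly: Anomaly) -> list[str]:
        actions = []
        if anomaly.severity >= 0.8:  # assumed cutoff for emergency response
            actions.append(f"power: cap {anomaly.rack_id}")
            actions.append(f"workload: migrate jobs off {anomaly.rack_id}")
        if anomaly.severity >= 0.5:  # assumed cutoff for opening a ticket
            actions.append(
                f"maintenance: open ticket for {anomaly.kind} on {anomaly.rack_id}"
            )
        # Recovery validation always runs last, mirroring the System Recovery Agent.
        actions.append(f"recovery: verify sensors on {anomaly.rack_id}")
        self.log.append((anomaly, actions))  # record for later reporting
        return actions


coordinator = Coordinator()
plan = coordinator.handle(Anomaly("rack-017", "low_flow", 0.9))
```

For a severity of 0.9, the plan contains four steps: power capping, workload migration, a maintenance ticket, and a final recovery check.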

By embedding this specific architecture into the control plane, the solution adds distinct operational value. First, it ensures deterministic latency; by keeping the entire reasoning context in HBM3E memory, the system minimizes "reasoning loops" (retries), ensuring stable response times even during rapid pressure spikes. Second, it creates infrastructure memory; because all agent interactions and resolutions are stored as vector embeddings, the system implements a self-reinforcing learning loop that instantly recalls successful past remediation strategies, reducing false positives and progressively optimizing cooling efficiency over time.

Key Remediation Scenarios

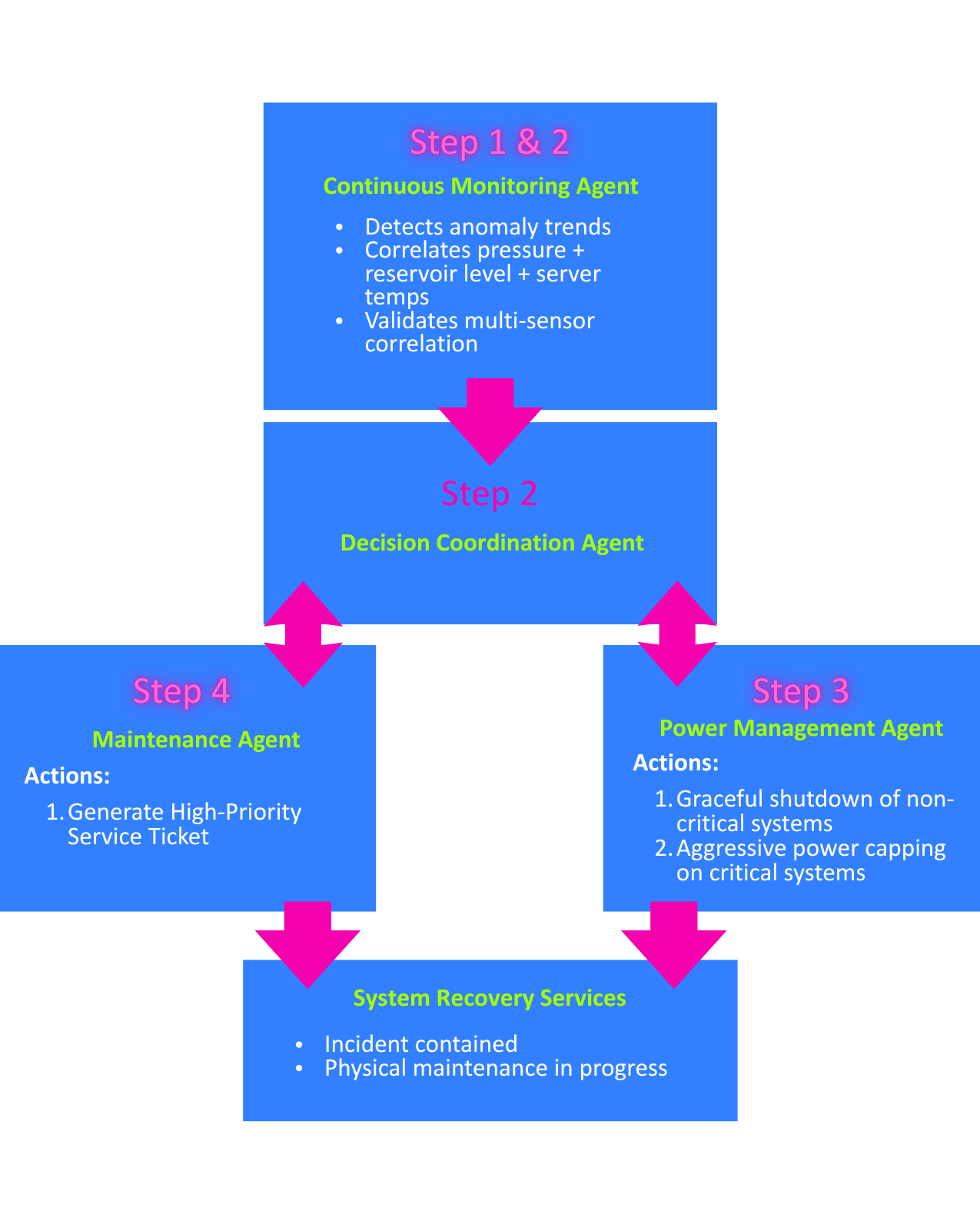

To validate the autonomous capabilities of the multi-agent framework, the system was subjected to a series of representative datacenter simulations. These scenarios demonstrate how the specialized agents collaborate to resolve critical hydraulic and thermal challenges that typically exceed the reaction speed of human operators.

Figure 2. Turn-Key Rack-Scale Cooling Solution.

Mitigating Immediate Physical Threats

The system's primary objective is to prevent catastrophic hardware failure through rapid intervention.

- Cascading Thermal Events: When multiple sensors indicate rising coolant return temperatures alongside reduced flow, the system identifies a potential heat exchanger blockage. Agents automatically initiate emergency power capping, redistribute workloads to cooler nodes, and trigger maintenance tickets to address the root cause, preventing thermal runaway.

- Coolant Leaks and Pressure Drops: The system correlates a slow decline in pressure with a reduction in coolant levels to identify a leak. The agents isolate the leak, execute a controlled shutdown of non-critical systems to reduce pressure, and dispatch high-priority maintenance to the physical location.
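
The two signal combinations described above can be expressed as simple correlation rules. The sketch below is illustrative only: the field names and thresholds are assumptions (the 1.0 PSI pressure threshold is chosen from the 1-2 PSI range cited in the executive summary), and the actual solution relies on agent reasoning rather than fixed rules.

```python
# Assumed thresholds, measured across the observation window.
TEMP_RISE_C = 3.0          # return temperature increase
FLOW_DROP_LPM = 5.0        # flow rate decrease
PRESSURE_DROP_PSI = 1.0    # loop pressure decrease
LEVEL_DROP_PCT = 2.0       # coolant reservoir level decrease


def classify(samples: list[dict]) -> str:
    """Compare the oldest and newest samples and name the likely condition."""
    first, last = samples[0], samples[-1]
    temp_rising = last["return_temp_c"] - first["return_temp_c"] >= TEMP_RISE_C
    flow_falling = first["flow_lpm"] - last["flow_lpm"] >= FLOW_DROP_LPM
    pressure_falling = first["pressure_psi"] - last["pressure_psi"] >= PRESSURE_DROP_PSI
    level_falling = first["coolant_level_pct"] - last["coolant_level_pct"] >= LEVEL_DROP_PCT

    if temp_rising and flow_falling:
        return "possible heat exchanger blockage"
    if pressure_falling and level_falling:
        return "possible coolant leak"
    return "nominal"


blockage_window = [
    {"return_temp_c": 40.0, "flow_lpm": 40.0, "pressure_psi": 30.0, "coolant_level_pct": 95.0},
    {"return_temp_c": 46.0, "flow_lpm": 33.0, "pressure_psi": 30.0, "coolant_level_pct": 95.0},
]
leak_window = [
    {"return_temp_c": 40.0, "flow_lpm": 40.0, "pressure_psi": 30.0, "coolant_level_pct": 95.0},
    {"return_temp_c": 40.5, "flow_lpm": 39.0, "pressure_psi": 28.5, "coolant_level_pct": 92.0},
]
```

Requiring two signals to move together, rather than alerting on either alone, is what distinguishes a blockage or leak from ordinary sensor noise in this sketch.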

Predictive Maintenance and Optimization

Beyond crisis management, the system leverages predictive analytics to shift operations from reactive repairs to proactive optimization.

- Pump Performance Degradation: By analyzing flow and pressure fluctuations combined with increased pump power draw, the system detects mechanical wear or flow inconsistencies. Agents stabilize flow rates, rebalance workloads to reduce strain, and schedule predictive maintenance before the performance degradation impacts server uptime.

- Early Pump Failure Detection: The Predictive Analytics Agent uses vibration and power-signature analysis to detect specific bearing wear patterns. This allows wear to be detected weeks before failure, enabling proactive replacement during scheduled windows rather than emergency downtime.

- Coolant Chemistry Degradation: Subtle shifts in the relationship between flow and temperature can reveal the chemical breakdown of coolant additives. Agents detect these drift patterns, generating a sample test ticket and dynamically adjusting thermal thresholds to maintain cooling efficiency until the coolant can be serviced.
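
One simple way to produce a remaining useful life (RUL) estimate from a degradation trend is to fit a line to a wear indicator and extrapolate to a failure threshold. The sketch below uses an ordinary least-squares fit on vibration readings; the readings, units, and threshold are hypothetical, and the source does not state which models the Predictive Analytics Agent actually uses.

```python
from typing import Optional


def estimate_rul_days(
    days: list[float], vibration: list[float], failure_threshold: float
) -> Optional[float]:
    """Fit vibration = slope * day + intercept and extrapolate to the threshold.

    Returns the days remaining after the last sample, or None when the
    trend is flat or improving (no crossing can be projected).
    """
    n = len(days)
    if n < 2:
        return None
    mean_x = sum(days) / n
    mean_y = sum(vibration) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        return None
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, vibration))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing_day = (failure_threshold - intercept) / slope
    return max(0.0, crossing_day - days[-1])


# Hypothetical weekly vibration readings (mm/s) and an assumed failure threshold.
sample_days = [0.0, 7.0, 14.0, 21.0]
sample_vibration = [2.0, 2.5, 3.0, 3.5]
rul = estimate_rul_days(sample_days, sample_vibration, failure_threshold=6.0)
```

With these illustrative readings the vibration grows by 0.5 mm/s per week, so the fitted line reaches 6.0 mm/s on day 56, about 35 days after the last sample.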

Figure 3. Pump Performance Degradation with Flow Instability - Agent Remediation Process.

Figure 4. Multi-Agent Orchestration Process Flow.

Dashboard and Operational Interface

Figure 5. Real-Time Monitoring Dashboard with Agent Chat Interface.

The solution provides an intuitive operational dashboard that combines real-time telemetry visualization with an agentic chat interface. Operators can interact with the multi-agent system through natural language queries while monitoring thermal stability metrics, agent status, and active remediation workflows across the entire datacenter infrastructure.

Hardware Platform

| Component | Specification |
|---|---|
| Platform | Supermicro A+ Server (AS-8126GS-TNMR2) |
| CPU | AMD EPYC 9005 Series Processors |
| GPU | 8x AMD Instinct MI325X Accelerators (256 GB HBM3E each) |
| Interconnect | AMD Infinity Fabric (xGMI/IF) |
| Software Stack | AMD ROCm, vLLM, Model Context Protocol (MCP) |
| Primary Model | Qwen3-235B FP8 (4 replicas) |
| Secondary Model | Qwen3-30B BF16 |
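
A back-of-the-envelope memory budget helps show why this configuration can host four replicas of the primary model. The calculation below is a rough sketch under stated assumptions: FP8 weights take about 1 byte per parameter, the four replicas are split evenly across the eight GPUs (two GPUs per replica), and runtime overhead is ignored. None of these details are confirmed by the source.

```python
PARAMS_BILLION = 235        # Qwen3-235B parameter count, in billions
BYTES_PER_PARAM_FP8 = 1     # assumption: FP8 stores one byte per weight
HBM_PER_GPU_GB = 256        # MI325X HBM3E capacity per accelerator
GPUS = 8
REPLICAS = 4

# 1e9 parameters at 1 byte each is roughly 1 GB (decimal).
weights_gb = PARAMS_BILLION * BYTES_PER_PARAM_FP8

gpus_per_replica = GPUS // REPLICAS                      # assumed even split
hbm_per_replica_gb = gpus_per_replica * HBM_PER_GPU_GB

# Memory left per replica for KV cache, activations, and runtime overhead.
headroom_gb = hbm_per_replica_gb - weights_gb

print(f"weights per replica:  ~{weights_gb} GB")
print(f"HBM per replica:      {hbm_per_replica_gb} GB")
print(f"headroom per replica: ~{headroom_gb} GB")
```

Under these assumptions each replica has roughly 277 GB left after loading weights, which is the capacity available for long-context key-value caches and concurrent requests.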

Performance Benchmarks

Comprehensive performance testing on AMD Instinct MI325X accelerators shows that both Qwen3-30B BF16 and Qwen3-235B FP8 scale predictably under increasing concurrency across short- and long-context workloads. Qwen3-30B BF16 achieves higher absolute throughput under high request parallelism, while Qwen3-235B FP8 exhibits superior stability for long-context reasoning enabled by full in-memory execution within the MI325X's 256 GB HBM3E. No throughput cliffs, context truncation, or concurrency instabilities were observed across the tested regimes, confirming that MI325X sustains both high-throughput and deep-reasoning workloads under dense multi-request conditions.

Multi-Agent Autonomous Cooling Platform Key Insights

- Linear and Predictable Telemetry Scaling: Redfish telemetry ingestion increases proportionally with rack count - from 1 to 200 racks - demonstrating stable, linear scaling across ingestion, fusion, and routing layers.

- Validated Datacenter-Scale Throughput: The system successfully processed 13,198 Redfish telemetry endpoints/min while simultaneously sustaining 8,214 tokens/sec of large-model reasoning across four replicas of Qwen3-235B FP8 on 8x MI325X GPUs.

- High-Memory LLM Performance at Scale: The 256 GB HBM3E on each AMD Instinct MI325X enabled larger context windows, deeper per-token reasoning, and stable performance under concurrent multi-agent loads - without activation offloading or window truncation.

- Superior Multi-Agent Convergence: Qwen3-235B FP8 exhibited smoother convergence, fewer retries, and more consistent decision-making under telemetry pressure due to improved semantic depth and FP8 optimization on AMD MI325X accelerators.

- Memory-Enabled Replica Density: The large memory footprint allowed the platform to host four concurrent replicas of a 235B-parameter model per system, enabling multi-agent orchestration and long-horizon reasoning in dense multi-rack environments.
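
The headline figures above can be broken down into per-second, per-rack, and per-replica rates. This is simple arithmetic on the reported numbers, assuming load is spread evenly across racks, replicas, and GPUs, which the source does not state.

```python
ENDPOINTS_PER_MIN = 13_198   # reported Redfish telemetry endpoints per minute
TOKENS_PER_SEC = 8_214       # reported aggregate reasoning throughput
RACKS = 200
REPLICAS = 4
GPUS = 8

endpoints_per_sec = ENDPOINTS_PER_MIN / 60
endpoints_per_rack_per_min = ENDPOINTS_PER_MIN / RACKS

# Even distribution across replicas and GPUs is an assumption.
tokens_per_sec_per_replica = TOKENS_PER_SEC / REPLICAS
tokens_per_sec_per_gpu = TOKENS_PER_SEC / GPUS

print(f"endpoints/sec:           {endpoints_per_sec:.1f}")
print(f"endpoints/rack/min:      {endpoints_per_rack_per_min:.2f}")
print(f"tokens/sec per replica:  {tokens_per_sec_per_replica:.1f}")
print(f"tokens/sec per GPU:      {tokens_per_sec_per_gpu:.2f}")
```

On an even split, this works out to about 220 telemetry endpoints per second and about 2,050 tokens per second per replica.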

Model Inference Throughput and Concurrency Scaling Key Insights

- Throughput vs. Reasoning Tradeoff: Qwen3-30B BF16 consistently achieves higher peak tokens/sec under high concurrency, making it well-suited for throughput-optimized inference workloads, while Qwen3-235B FP8 prioritizes deeper reasoning stability under dense parallel execution.

- Long-Context Stability at Scale: Qwen3-235B FP8 maintains stable throughput across long-context (2048 input / 2048 output tokens) workloads without activation offloading or context truncation, enabled by full in-memory execution within the MI325X's 256 GB HBM3E.

- Predictable Concurrency Scaling: Both Qwen3-30B BF16 and Qwen3-235B FP8 scale smoothly as concurrent request levels increase, with no observable throughput cliffs or inference instability across short- and long-context regimes.

- FP8 Efficiency for Large Models: FP8 execution on AMD Instinct MI325X enables large-parameter models to sustain high utilization under concurrent load while preserving semantic depth and long-horizon reasoning continuity.

- Concurrency Without Memory Saturation: Even under thousands of concurrent requests, neither model exhibits memory-induced degradation, confirming that MI325X provides sufficient bandwidth and capacity for dense, multi-request inference pipelines.

These performance results demonstrate how the Supermicro A+ platform, powered by AMD EPYC processors for telemetry fusion and AMD Instinct MI325X accelerators for large-model inference, maintains consistent responsiveness even under extreme telemetry pressure. The system scaled to ingest up to 13,198 Redfish telemetry endpoints per minute while simultaneously sustaining 8,214 tokens/sec across four replicas of the Qwen3-235B FP8 model - validating the platform's ability to support autonomous, rack-scale liquid-cooling intelligence that must deliver both ingestion throughput and deep multi-agent reasoning in real time.

Benefits

The shift to liquid cooling introduces a fundamental operational gap: hydraulic dynamics move faster than human operators can react. By bridging this gap with an autonomous, multi-agent control plane, the collaboration between Supermicro, AMD, and Metrum AI delivers a solution that transforms cooling from a source of risk into a competitive advantage.

- From Reactive to Predictive Reliability: Traditional monitoring reacts only after a failure occurs. In contrast, this solution uses AMD Instinct GPUs to analyze telemetry streams in real time, detecting micro-fractures, pump degradation patterns, and coolant chemistry drift weeks before they trigger a failure. This shifts the operational model from reactive crisis management to proactive intervention, preventing catastrophic leaks and ensuring maximum uptime for critical AI workloads.

- Precision Energy Efficiency: Static cooling policies waste energy by over-cooling idle racks. By dynamically adjusting pump speeds and fan curves based on real-time CPU/GPU telemetry, the multi-agent platform ensures that energy is expended only where thermally necessary. AMD's high-performance-per-watt architecture further amplifies this efficiency, minimizing the power overhead of the control plane itself to improve overall Power Usage Effectiveness (PUE).

- Deterministic Scalability: A major challenge in liquid-cooled clusters is maintaining control visibility as rack counts grow. The modular design of Supermicro A+ servers, combined with ROCm's distributed memory model, ensures that inference performance remains consistent regardless of scale. As validated in the performance benchmarks, the system maintains sub-second reasoning latency whether monitoring a single rack or a 200-rack cluster, providing a future-proof foundation for expansion.

- Operational Continuity: Hardware maintenance typically requires costly downtime. The solution addresses this by combining predictive intelligence with physical modularity. Agents identify exactly which component is degrading, and Supermicro's hot-swappable architecture (fans, PSUs, pump modules) allows operators to replace that specific component while the system remains online.

- High-Bandwidth Operational Intelligence: The volume of data generated by liquid-cooling sensors often overwhelms standard control loops. AMD EPYC processors provide the massive I/O bandwidth needed to ingest these uninterrupted streams, while AMD Instinct accelerators rapidly process the multivariate data. This creates a "self-optimizing fabric" where the cooling subsystem continuously learns from its own environment, turning raw sensor noise into actionable operational intelligence.
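
The dynamic pump-speed adjustment described under Precision Energy Efficiency can be pictured as a basic proportional controller. The sketch below is a generic control-loop illustration, not the platform's control law: the target temperature, gain, and speed limits are assumed values.

```python
def pump_speed_pct(
    return_temp_c: float,
    target_temp_c: float = 45.0,   # assumed coolant return setpoint
    gain_pct_per_c: float = 5.0,   # assumed speed change per degree of error
    base_pct: float = 40.0,        # assumed speed when exactly on target
    min_pct: float = 30.0,         # never stop circulation entirely
    max_pct: float = 100.0,
) -> float:
    """Proportional control: speed rises with temperature above the target."""
    error = return_temp_c - target_temp_c
    speed = base_pct + gain_pct_per_c * error
    return min(max(speed, min_pct), max_pct)


# An idle rack runs at the floor speed; a hot rack ramps up, capped at 100%.
idle_speed = pump_speed_pct(30.0)
warm_speed = pump_speed_pct(50.0)
hot_speed = pump_speed_pct(80.0)
```

Compared with a static policy that runs every pump at a fixed high speed, a rule like this spends pump energy only on racks whose return temperature is above the target.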

Conclusion

The rapid transition to high-density liquid cooling presents a fundamental paradox: while essential for modern AI workloads, the hydraulic dynamics of these systems move faster than human operators or traditional tools can manage. The collaboration between Supermicro, AMD, and Metrum AI resolves this "complexity barrier" by transforming cooling from a passive maintenance burden into an active, intelligent control layer.

By integrating Supermicro's purpose-built A+ infrastructure with the computational power of AMD, the solution delivers the sub-second decision-making required to ensure safety at scale. Powered by AMD EPYC processors for high-throughput data fusion and AMD Instinct MI325X accelerators for deep reasoning, the architecture has been proven to ingest 13,198 telemetry endpoints per minute and sustain 8,214 tokens/sec of multi-agent reasoning. Crucially, this capability is unlocked by the MI325X's 256 GB of HBM3E memory, which allows agents to maintain the full historical context needed to predict failures weeks in advance - a feat impossible on memory-constrained hardware.

The result is a datacenter that is adaptive, resilient, and self-optimizing. It supports the massive compute intensity of next-generation AI workloads while proactively managing the thermal and physical risks that come with them. This solution demonstrates that the ecosystem is now fully prepared to support autonomous platforms. With ROCm providing an open, high-performance foundation and Supermicro delivering liquid-cooled density, organizations can now deploy the intelligent infrastructure necessary to support the AI era.

We invite partners, developers, and innovators to bring advanced agentic workloads - monitoring, remediation, optimization, and full closed-loop control - to life on Supermicro and ROCm. The infrastructure is ready. The models are ready.

For more information, email a_plus_server_taskforce@supermicro.com

DISCLAIMER: Benchmarks were conducted by Metrum AI on hardware and software using the configurations, datasets, and model servers described. Performance may vary based on model selection and version, framework and library revisions, drivers and firmware, precision settings, data preprocessing, and application complexity. Because these components evolve over time, observed performance may change as software and firmware mature. Results are provided for informational purposes only and do not constitute a guarantee of future performance. Users should conduct their own validation testing on production-representative workloads and configurations.